
Archive for the ‘Testing’ Category

Friday, March 23rd, 2018

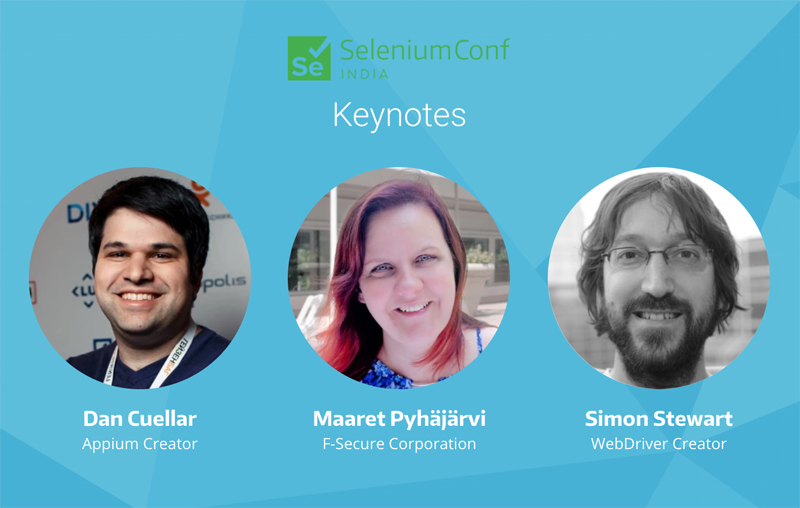

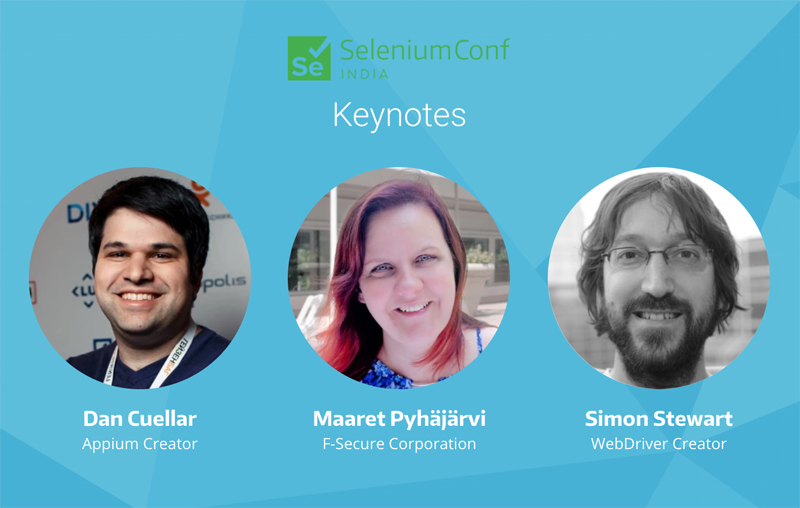

The Selenium India 2018 Conference is thrilled to announce our keynote speakers:

Program: We’ve received a total of 139 proposals via our open submissions system. Our program team is currently reviewing the proposals and we should have the first list of speakers next week.

Pre-Conf Workshop: We are happy to offer the following four full-day deep-dive workshops on June 28th (pre-conf):

About the Conf:

The Selenium Conference is a non-profit, volunteer-run event presented by members of the Selenium Community.

The goal of the conference is to bring together Selenium developers & enthusiasts from around the world to share ideas, socialize, and work together on advancing the present and future success of the project.

Sponsors:

Selenium Conference is a great opportunity to meet and get to know hundreds of the world’s top Selenium talent. Whether you’re looking to connect with experienced developers, testers, and users of Selenium, hire automation engineers, promote your product, or just give back to the community, this is the place to be. We love helping our sponsors find innovative ways to interact with the community and achieve a great return on their investment in the conference.

Selenium Conference is organized by passionate volunteers from the Selenium community. We rely on corporate sponsorship to keep ticket prices low for attendees and to help cover the cost of the venue, food, beverages, t-shirts, and more.

Check out our sponsorship guide!

Spread the Word: If possible, please print these posters and put them on your office notice board, or forward them to folks in your network.

Posted in Conference, Testing | No Comments »

Thursday, June 12th, 2014

We are delighted to announce that this year we’ll be hosting the 4th annual (official) Selenium Conference in Bangalore, India. This is your golden opportunity to meet the Selenium community and the test automation community in general.

The goal of the conference is to bring together Selenium developers & enthusiasts from around the world to share ideas, socialise, and work together on advancing the present and future success of the project.

If you are interested in presenting at the Selenium Conf, please submit your proposals at http://confengine.com/selenium-conf-2014

Registrations have already started. Register now at http://booking.agilefaqs.com/selenium-conf-2014

To know more about the conference, please visit http://seleniumconf.org

Posted in Community, Conference, Testing | No Comments »

Thursday, December 5th, 2013

I remember the days before any mocking frameworks existed in Java, when we used to create an anonymous inner class of an abstract class to fake out the abstract methods’ behaviour while using the real logic of the concrete methods.

This worked fine, except when there were a lot of abstract methods: overriding each of them to do nothing or return a dummy value felt like a complete waste of time.
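For readers who never had to work this way, here is a minimal sketch of that pre-Mockito approach. The names `Greeter`, `retryCount()` and `GreeterFakes` are hypothetical, chosen only for illustration; the point is the anonymous inner class that fakes the abstract methods while inheriting the real `sayHello()`.

```java
// Hypothetical abstract class, shaped like the AbstractClazz examples further down.
abstract class Greeter {
    // Concrete method whose real logic we want to exercise.
    public String sayHello() {
        return "Hello " + fetchName() + "!";
    }

    // Abstract method we want to fake.
    protected abstract String fetchName();

    // Hypothetical abstract method that sayHello() never uses,
    // but which the anonymous class is still forced to override.
    protected abstract int retryCount();
}

class GreeterFakes {
    // Anonymous inner class: fakes the abstract methods, inherits the real sayHello().
    static Greeter fakeGreeter() {
        return new Greeter() {
            @Override
            protected String fetchName() {
                return "Naresh";
            }

            @Override
            protected int retryCount() {
                return 0; // dummy value, only here to satisfy the compiler
            }
        };
    }

    public static void main(String[] args) {
        System.out.println(fakeGreeter().sayHello()); // prints: Hello Naresh!
    }
}
```

With one irrelevant abstract method this is tolerable; with a dozen of them, the dummy overrides quickly outnumber the code actually under test.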

With mocking frameworks like Mockito, we have a better way to deal with such situations, especially in legacy code. But there is a catch. Let me explain with a code example.

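```java
// AbstractClazz.java
public abstract class AbstractClazz {
    public String sayHello() {
        return "Hello";
    }
}

// AbstractClazzTest.java
import static org.junit.Assert.assertEquals;
import static org.mockito.Mockito.mock;

import org.junit.Test;

public class AbstractClazzTest {
    @Test
    public void shouldCallRealMethods() {
        // A default mock stubs every method, including the concrete sayHello()
        AbstractClazz clazz = mock(AbstractClazz.class);
        assertEquals("Hello", clazz.sayHello());
    }
}
```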

This test fails with the following error:

```
java.lang.AssertionError: expected:<Hello> but was:<null>
    at org.junit.Assert.fail(Assert.java:91)
    at org.junit.Assert.failNotEquals(Assert.java:645)
    at org.junit.Assert.assertEquals(Assert.java:126)
    at org.junit.Assert.assertEquals(Assert.java:145)
    at com.agilefaqs.mocking.AbstractClazzTest.shouldNotFakeRealMethods(AbstractClazzTest.java:20)
```

To make this work, we need to pass the CALLS_REAL_METHODS Answer as a parameter while creating the mock:

```java
AbstractClazz clazz = mock(AbstractClazz.class, CALLS_REAL_METHODS);
```

Now let’s say our requirements have evolved: sayHello() should also greet the person by name. Different implementations will fetch the person’s name in different ways.

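```java
// AbstractClazz.java
public abstract class AbstractClazz {
    public String sayHello() {
        return "Hello " + fetchName() + "!";
    }

    protected abstract String fetchName();
}

// AbstractClazzTest.java (same package, so it can call the protected fetchName())
import static org.junit.Assert.assertEquals;
import static org.mockito.Mockito.CALLS_REAL_METHODS;
import static org.mockito.Mockito.mock;
import static org.mockito.Mockito.when;

import org.junit.Test;

public class AbstractClazzTest {
    @Test
    public void shouldCallRealMethodsAndFakeAbstractMethod() {
        AbstractClazz clazz = mock(AbstractClazz.class, CALLS_REAL_METHODS);
        // clazz.fetchName() is itself routed through CALLS_REAL_METHODS, i.e. to the abstract method
        when(clazz.fetchName()).thenReturn("Naresh");
        assertEquals("Hello Naresh!", clazz.sayHello());
    }
}
```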

The moment we run this test, we get the following error:

```
java.lang.AbstractMethodError: com.agilefaqs.mocking.AbstractClazz.fetchName()Ljava/lang/String;
    at com.agilefaqs.mocking.AbstractClazzTest.shouldCallRealMethodsAndFakeAbstractMethod(AbstractClazzTest.java:22)
```

Basically, we need our mocking framework to give us a mock that allows partial mocking: for some methods we want the real implementation to be invoked, and for others we want a fake implementation.

One way to implement this is to create a default mock and then explicitly set expectations on the real methods.

```java
@Test
public void shouldCallRealMethodsAndFakeAbstractMethod() {
    AbstractClazz clazz = mock(AbstractClazz.class);   // every method is stubbed by default
    when(clazz.sayHello()).thenCallRealMethod();      // opt sayHello() back in to its real code
    when(clazz.fetchName()).thenReturn("Naresh");     // fake the abstract method
    assertEquals("Hello Naresh!", clazz.sayHello());
}
```

The main problem with this approach is that we need to set explicit expectations on every public and protected method that the main method (sayHello in this case) might call internally. To make matters worse, private methods can’t be mocked, so we can’t set expectations on them; they simply run for real. But if someone later makes a private method protected or public, the test will fail, because that method will now get mocked. Overall, this strategy can make your tests extremely fragile.

For example, the following works:

```java
public abstract class AbstractClazz {
    public String sayHello() {
        return "Hello " + fetchName() + closingSymbol();
    }

    // private: Mockito can't intercept it, so the real method always runs
    private String closingSymbol() {
        return "!";
    }

    protected abstract String fetchName();
}

public class AbstractClazzTest {
    @Test
    public void shouldCallRealMethodsAndFakeAbstractMethod() {
        AbstractClazz clazz = mock(AbstractClazz.class);
        when(clazz.sayHello()).thenCallRealMethod();
        when(clazz.fetchName()).thenReturn("Naresh");
        assertEquals("Hello Naresh!", clazz.sayHello());
    }
}
```

However, if we change the closingSymbol() method to protected or public, the test fails with the following error:

```
org.junit.ComparisonFailure: expected:<Hello Naresh[!]> but was:<Hello Naresh[null]>
```

A better approach in Mockito is to pass a custom Answer when creating the mock. The following Answer implementation does partial mocking:

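```java
// AbstractMethodMocker.java
import static java.lang.reflect.Modifier.isAbstract;
import static org.mockito.Mockito.CALLS_REAL_METHODS;
import static org.mockito.Mockito.RETURNS_DEFAULTS;

import org.mockito.invocation.InvocationOnMock;
import org.mockito.stubbing.Answer;

public class AbstractMethodMocker implements Answer<Object> {
    @Override
    public Object answer(InvocationOnMock invocation) throws Throwable {
        Answer<Object> answer;
        if (isAbstract(invocation.getMethod().getModifiers()))
            answer = RETURNS_DEFAULTS;    // no real code to call: return a default (null, 0, false, ...)
        else
            answer = CALLS_REAL_METHODS;  // concrete method: run the real implementation
        return answer.answer(invocation);
    }
}

// AbstractClazzTest.java
public class AbstractClazzTest {
    @Test
    public void shouldCallRealMethodsAndFakeAbstractMethod() {
        AbstractClazz clazz = mock(AbstractClazz.class, new AbstractMethodMocker());
        when(clazz.fetchName()).thenReturn("Naresh");
        assertEquals("Hello Naresh!", clazz.sayHello());
    }
}
```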
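The only decision AbstractMethodMocker makes is a reflection check on the invoked method’s modifiers. The plain-Java sketch below (no Mockito involved) applies the same `Modifier.isAbstract` test to a copy of AbstractClazz in which closingSymbol() has been made protected, which is exactly the change that broke the explicit-stubbing test. `AnswerRouting`, `route` and `routeByName` are hypothetical names; the strings returned merely name the Mockito answer that would be picked.

```java
import java.lang.reflect.Method;
import java.lang.reflect.Modifier;

// Copy of the post's AbstractClazz, with closingSymbol() widened to protected.
abstract class AbstractClazz {
    public String sayHello() {
        return "Hello " + fetchName() + closingSymbol();
    }

    protected String closingSymbol() {
        return "!";
    }

    protected abstract String fetchName();
}

class AnswerRouting {
    // Same branch as AbstractMethodMocker.answer(): abstract -> default value, otherwise -> real method.
    static String route(Method method) {
        return Modifier.isAbstract(method.getModifiers()) ? "RETURNS_DEFAULTS" : "CALLS_REAL_METHODS";
    }

    // Looks up a method declared on AbstractClazz (any visibility) and routes it.
    static String routeByName(String methodName) {
        try {
            return route(AbstractClazz.class.getDeclaredMethod(methodName));
        } catch (NoSuchMethodException e) {
            throw new IllegalArgumentException("No such method: " + methodName, e);
        }
    }

    public static void main(String[] args) {
        for (String name : new String[] {"sayHello", "closingSymbol", "fetchName"}) {
            System.out.println(name + " -> " + routeByName(name));
        }
        // prints:
        // sayHello -> CALLS_REAL_METHODS
        // closingSymbol -> CALLS_REAL_METHODS
        // fetchName -> RETURNS_DEFAULTS
    }
}
```

Because closingSymbol() is concrete, it is routed to its real implementation whether it is private, protected or public, so widening its visibility no longer changes the test’s outcome.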

If you find yourself forced to use this technique in brand-new code, may I suggest the Delete button…

Posted in Agile, Java, Testing | 2 Comments »

Tuesday, March 19th, 2013

It’s easy to speak of test-driven development as if it were a single method, but there are several ways to approach it. In my experience, different approaches lead to quite different solutions.

In this hands-on workshop, using some concrete examples, I’ll demonstrate the different styles and, more importantly, what goes into the moment of decision when a test is written, and why TDDers make certain choices. The objective of the session is not to decide which approach is best, but rather to highlight the various approaches and styles of practicing test-driven development.

By the end of this session, you will understand how TDDers break down a problem before trying to solve it. You’ll also be exposed to various strategies and techniques TDDers use to help them write the first few tests.

Posted in Agile, Design, Programming, Testing | No Comments »

Tuesday, March 19th, 2013

As more and more companies move to the Cloud, they want their latest, greatest software features to be available to their users as soon as they are built. However, several issues are holding them back.

One key issue is the massive amount of time it takes for someone to certify that a new feature is indeed working as expected, and that the rest of the features continue to work. In spite of this long waiting cycle, we still cannot guarantee that our software will be free of issues. In fact, many times our assumptions about the user’s needs or behavior might themselves be wrong, and this long testing cycle only validates that the software works as we assumed it would, not that those assumptions were right.

How can we break out of this rut & get thin slices of our features in front of our users to validate our assumptions early?

Most software organizations today suffer from what I call the “Inverted Testing Pyramid” problem. They spend most of their time and effort manually checking software. Some invest in automation, but mostly in building slow, complex, fragile end-to-end GUI tests. Very little effort is spent on building a solid foundation of unit & acceptance tests.

This over-investment in end-to-end tests is a slippery slope. Once you start down this path, you end up investing ever more time & effort in testing, for diminishing returns.

In this session Naresh Jain will explain the key misconceptions that have led to the inverted testing pyramid being so widely adopted, the main drawbacks of this approach, and how to turn your organization around to get the right testing pyramid.

Posted in Agile, Continuous Deployment, Deployment, Testing | No Comments »

Monday, April 16th, 2012

I’m not against manual testing, especially exploratory testing. However, one needs to consider the following issues with manual testing (checking), along with the corresponding advantages of automated tests (checks):

- Manual Tests are more expensive and time consuming

- Manual Testing becomes mundane and boring

- Manual Tests are not reusable

- Manual Tests provide limited visibility and have to be repeated by all Stakeholders

- Automated Tests (Checks) can have varying scopes and may require less complex setup and teardown

- Automated Testing ensures repeatability (no steps get missed out)

- Automated Testing drives cleaner design

- Automated Tests provide a safety net for refactoring

- Automated Tests are a living, up-to-date specification document

- Automated Tests do not clutter your code/console/logs

Posted in Agile, Testing | No Comments »

Tuesday, November 1st, 2011

“Release Early, Release Often” is a proven mantra, but what happens when you push this practice to its limits, i.e. deploying the latest code changes to the production servers every time a developer checks in code?

At Industrial Logic, developers are deploying code dozens of times a day, rapidly responding to their customers and reducing their “code inventory”.

This talk will demonstrate our approach, along with the deployment architecture, tools and culture needed for CD, and how we gradually got there at Industrial Logic.

Process/Mechanics

This will be a 60-minute interactive talk with a demo. It also includes a small group activity as an icebreaker.

Key takeaway: When we started about 2 years ago, achieving CD felt like a huge step, almost all or nothing. Over the next 6 months we were able to break down the problem and achieve CD in baby steps. I think the approach we took to CD is the key takeaway from this session.

Talk Outline

- Context Setting: Need for Continuous Integration (3 mins)

- Next steps to CI (2 mins)

- Intro to Continuous Deployment (5 mins)

- Demo of CD at Freeset (for Content Delivery on Web) (10 mins) – a quick, live walkthrough of how the deployment and servers are set up

- Benefits of CD (5 mins)

- Demo of CD for Industrial Logic’s eLearning (15 mins) – a detailed walkthrough of our evolution and a live demo of the steps that take place during our CD process

- Zero Downtime deployment (10 mins)

- CD’s Impact on Team Culture (5 mins)

- Q&A (5 mins)

Target Audience

- CTO

- Architect

- Tech Lead

- Developers

- Operations

Context

What is Industrial Logic’s eLearning context (number of changes, developers, customers, etc.)?

Industrial Logic’s eLearning has rich multi-media interactive content delivered over the web. Our eLearning modules (called Albums) have pictures & text, videos, quizzes, and programming exercises (labs) in 5 different programming languages. Behind them sit a packing system to validate & produce the labs, plugins for different IDEs on different platforms to record programming sessions, an analysis engine to score students’ lab work in different languages, a commenting system, a reporting system to generate different kinds of student reports, etc.

We have 2 kinds of changes: eLearning platform changes (which require updating code or configuration) and content changes (either code or other multi-media changes). These are managed by 5 distributed contributors.

On average, we’ve seen about 12 check-ins per day.

Our customers are developers, managers and L&D teams from companies like Google, GE Energy, HP, EMC, Philips, and many other Fortune 100 companies. Our customers have very high expectations of us. We have to demonstrate what we preach.

Learning outcomes

- General Architectural considerations for CD

- Tools and Cultural change required to embrace CD

- How to achieve Zero-downtime deploys (including databases)

- How to slice work (stories) such that something is deployable and usable very early on

- How to build different visibility levels such that new/experimental features are only visible to a subset of users

- What Delivery tests do

- You should walk away with some good ideas of how your company can practice CD

Slides from Previous Talks

Posted in Agile, Continuous Deployment, Deployment, Lean Startup, Product Development, Testing, Tools | No Comments »

Tuesday, November 1st, 2011

Recently we realized that our server logs were showing ‘null’ for all HTTP request parameter values.

```
SEVERE: Attempting to get user with null userName
parameters=[version:null][sessionId:null][userAgent:null][requestedUrl:null][queryString:null]
[action:null][session:null][userIPAddress:null][year:null][path:null][user:null]
```

On digging around a bit, we found the following buggy code in our custom Map class, which was used to hold the request parameters:

```java
@Override
public String toString() {
    StringBuilder parameters = new StringBuilder();
    for (Map.Entry<String, Object> entry : entrySet())
        parameters.append("[")
                  .append(entry.getKey())
                  .append(":")
                  .append(get(entry.getValue())) // BUG: looks the value up as if it were a key
                  .append("]");
    return parameters.toString();
}
```
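To see why every parameter in the log came out as null, here is a minimal, self-contained reproduction. `RequestParameters`, `buggyToString()`, `RequestParametersDemo` and the sample `version` parameter are hypothetical; the loop body is the one from the snippet above. The call `get(entry.getValue())` uses the value as a lookup key, which misses (unless some value happens to also be a key), so `null` is appended. Printing the map with its inherited `toString()` shows the values were there all along.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical stand-in for the custom Map class that held the request parameters.
class RequestParameters extends HashMap<String, Object> {
    // Same loop as the buggy toString() above, under a different name.
    String buggyToString() {
        StringBuilder parameters = new StringBuilder();
        for (Map.Entry<String, Object> entry : entrySet())
            parameters.append("[")
                      .append(entry.getKey())
                      .append(":")
                      .append(get(entry.getValue())) // value used as a key: lookup misses, null appended
                      .append("]");
        return parameters.toString();
    }
    // toString() is deliberately not overridden: HashMap inherits AbstractMap.toString().
}

class RequestParametersDemo {
    public static void main(String[] args) {
        RequestParameters params = new RequestParameters();
        params.put("version", "1.0");
        System.out.println(params.buggyToString()); // prints: [version:null]
        System.out.println(params);                 // prints: {version=1.0}
    }
}
```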

When we found this, many team members’ reaction was:

If the author had written unit tests, this bug would have been caught immediately.

Others responded saying:

But we usually don’t write tests for toString(), Getters and Setters. We pragmatically choose when to invest in unit tests.

As all of this was taking place, I was wondering why the author wrote this code in the first place. As you can see from the following snippet, Maps already know how to print themselves.

```java
@Test
public void mapKnowsHowToPrintItself() {
    Map<String, String> hashMap = new HashMap<>();
    hashMap.put("Key1", "Value1");
    hashMap.put("Key2", "Value2");
    System.out.println(hashMap);
}
```

Output: {Key2=Value2, Key1=Value1}

It’s easy to fall into the trap of first writing useless code and then defending it by writing more useless tests for it.

I’m a lazy developer and I always strive really hard to write as little code as possible. IMHO, real power and simplicity come from less code, not more.

Posted in Agile, Programming, Testing | No Comments »

Tuesday, November 1st, 2011

Every single line of code must be unit tested!

This advice seems rather extreme to me. IMHO a skilled programmer pragmatically decides when to invest in unit testing.

After practicing (automated) unit testing for over a decade, I’m a strong believer in and proponent of automated unit testing. (See also my take on why developers should care about Unit Testing and TDD.)

However, over the years I’ve realized that automated unit tests have four very important costs associated with them:

- Cost of writing the unit tests in the first place

- Cost of running the unit tests regularly to get feedback

- Cost of maintaining and updating the unit tests as and when required

- Cost of understanding others’ unit tests

One also starts to recognize some other subtle costs associated with unit testing:

- Illusion of safety: While unit tests give you a great safety net, at times they can also create an illusion of safety, leading developers to rely too heavily on unit tests alone (possibly doing more harm than good.)

- Opportunity cost: If I did not invest in this test, what else could I have done in that time? The flip side of this argument is the opportunity cost of repetitive manual testing or, even worse, not testing at all.

- Getting in the way: While unit tests help you drive your design, at times they do get in the way of refactoring. Many times, I’ve refrained from refactoring code because I was intimidated by the sheer effort of refactoring or rewriting a large number of my tests as well. (I’ve learned many patterns to reduce this pain over the years, but the pain still exists.)

- Obscures a simpler design: Many times, I find myself so engrossed in my tests and the design they lead to that I become blind to a better, simpler design. Also, sometimes half-way through, even if I realize that there might be an alternative design, it’s harder to throw away the code because I’ve already invested in a solution (plus all its tests). In retrospect this always seems like a bad choice.

If we consider all these factors, would you agree with me that:

Automated unit testing is extremely important, but each developer has to make a conscious, pragmatic decision when to invest in unit testing.

It’s easy to say always write unit tests, but it takes years of first-hand experience to judge where to draw the line.

Posted in Agile, Design, Learning, Programming, Testing | 4 Comments »

Tuesday, November 1st, 2011

Why should developers care about automated unit tests?

- Keeps you out of the (time hungry) debugger!

- Reduces bugs in new features and in existing features

- Reduces the cost of change

- Improves design

- Encourages refactoring

- Builds a safety net to defend against other programmers

- Is fun

- Forces you to slow down and think

- Speeds up development by eliminating waste

- Reduces fear

And how does TDD take it to the next level?

- Improves productivity by
  - minimizing time spent debugging
  - reducing the need for manual (monkey) checking by developers and testers
  - helping developers maintain focus
  - reducing wastage: hand-overs
- Improves communication
  - Creates a living, up-to-date specification
  - Communicates design decisions
- Learning: listen to your code
- Baby steps: slow down and think
- Improves confidence
  - Testable code by design + safety net
  - Loosely-coupled design
  - Refactoring

Posted in Agile, Design, Programming, Testing | No Comments »
