
Sunday, July 8th, 2012

| Letter | Terms |
|---|---|
| A | Acceptance Criteria/Test, Automation, A/B Testing, Adaptive Planning, Appreciative inquiry |
| B | Backlog, Business Value, Burndown, Big Visible Charts, Behavior Driven Development, Bugs, Build Monkey, Big Design Up Front (BDUF) |
| C | Continuous Integration, Continuous Deployment, Continuous Improvement, Celebration, Capacity Planning, Code Smells, Customer Development, Customer Collaboration, Code Coverage, Cyclomatic Complexity, Cycle Time, Collective Ownership, Cross functional Team, C3 (Complexity, Coverage and Churn), Critical Chain |
| D | Definition of Done (DoD)/Doneness Criteria, Done Done, Daily Scrum, Deliverables, Dojos, Drum Buffer Rope |
| E | Epic, Evolutionary Design, Energized Work, Exploratory Testing |
| F | Flow, Fail-Fast, Feature Teams, Five Whys |
| G | Grooming (Backlog) Meeting, Gemba |
| H | Hungover Story |
| I | Impediment, Iteration, Inspect and Adapt, Informative Workspace, Information radiator, Immunization test, IKIWISI (I’ll Know It When I See It) |
| J | Just-in-time |
| K | Kanban, Kaizen, Knowledge Workers |
| L | Last responsible moment, Lead time, Lean Thinking |
| M | Minimum Viable Product (MVP), Minimum Marketable Features, Mock Objects, Mistake Proofing, MOSCOW Priority, Mindfulness, Muda |
| N | Non-functional Requirements, Non-value add |
| O | Onsite customer, Opportunity Backlog, Organizational Transformation, Osmotic Communication |
| P | Pivot, Product Discovery, Product Owner, Pair Programming, Planning Game, Potentially shippable product, Pull-based-planning, Predictability Paradox |
| Q | Quality First, Queuing theory |
| R | Refactoring, Retrospective, Reviews, Release Roadmap, Risk log, Root cause analysis |
| S | Simplicity, Sprint, Story Points, Standup Meeting, Scrum Master, Sprint Backlog, Self-Organized Teams, Story Map, Sashimi, Sustainable pace, Set-based development, Service time, Spike, Stakeholder, Stop-the-line, Sprint Termination, Single Click Deploy, Systems Thinking, Single Minute Setup, Safe Fail Experimentation |
| T | Technical Debt, Test Driven Development, Ten minute build, Theme, Tracer bullet, Task Board, Theory of Constraints, Throughput, Timeboxing, Testing Pyramid, Three-Sixty Review |
| U | User Story, Unit Tests, Ubiquitous Language, User Centered Design |
| V | Velocity, Value Stream Mapping, Vision Statement, Vanity metrics, Voice of the Customer, Visual controls |
| W | Work in Progress (WIP), Whole Team, Working Software, War Room, Waste Elimination |
| X | xUnit |
| Y | YAGNI (You Aren’t Gonna Need It) |
| Z | Zero Downtime Deployment, Zen Mind |

Posted in Agile | No Comments »

Tuesday, November 1st, 2011

Recently we realized that our server logs were showing ‘null’ for the values of all HTTP request parameters.

    SEVERE: Attempting to get user with null userName
    parameters=[version:null][sessionId:null][userAgent:null][requestedUrl:null][queryString:null]
    [action:null][session:null][userIPAddress:null][year:null][path:null][user:null]

On digging around a bit, we found the following buggy code in our custom Map class, which was used to hold the request parameters:

    @Override
    public String toString() {
        StringBuilder parameters = new StringBuilder();
        for (Map.Entry<String, Object> entry : entrySet())
            parameters.append("[")
                      .append(entry.getKey())
                      .append(":")
                      .append(get(entry.getValue()))
                      .append("]");
        return parameters.toString();
    }

When we found this, many team members’ reaction was:

If the author had written unit tests, this bug would have been caught immediately.

Others responded saying:

But we usually don’t write tests for toString(), Getters and Setters. We pragmatically choose when to invest in unit tests.
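For anyone wondering why every value came out as null: the loop passes the entry's value to `get()`, which treats it as a key, and since no such key exists the lookup returns null. Below is a minimal reproduction. `RequestParameters` is a hypothetical stand-in for our custom Map class (the real class is not shown in this post), and the `LinkedHashMap` base is an assumption made only to keep the printed order stable.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical stand-in for the custom Map class; the name and the
// LinkedHashMap base class are assumptions made for this sketch.
class RequestParameters extends LinkedHashMap<String, Object> {

    // Same logic as the buggy toString(): the value is used as a lookup key.
    String buggyDescription() {
        StringBuilder parameters = new StringBuilder();
        for (Map.Entry<String, Object> entry : entrySet())
            parameters.append("[")
                      .append(entry.getKey())
                      .append(":")
                      .append(get(entry.getValue())) // no such key, so get() returns null
                      .append("]");
        return parameters.toString();
    }

    // The smallest possible fix: append the value itself.
    String fixedDescription() {
        StringBuilder parameters = new StringBuilder();
        for (Map.Entry<String, Object> entry : entrySet())
            parameters.append("[")
                      .append(entry.getKey())
                      .append(":")
                      .append(entry.getValue())
                      .append("]");
        return parameters.toString();
    }
}

class RequestParametersDemo {
    public static void main(String[] args) {
        RequestParameters params = new RequestParameters();
        params.put("user", "alice");
        params.put("year", 2011);
        System.out.println(params.buggyDescription()); // [user:null][year:null]
        System.out.println(params.fixedDescription()); // [user:alice][year:2011]
    }
}
```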

As all of this was taking place, I was wondering why the author had written this code in the first place. As you can see from the following snippet, Maps already know how to print themselves.

    @Test
    public void mapKnowsHowToPrintItself() {
        Map<String, String> hashMap = new HashMap<>();
        hashMap.put("Key1", "Value1");
        hashMap.put("Key2", "Value2");
        System.out.println(hashMap);
    }

Output: {Key2=Value2, Key1=Value1}

It’s easy to fall into the trap of first writing useless code and then defending it by writing more useless tests for it.

I’m a lazy developer and I always strive really hard to write as little code as possible. IMHO real power and simplicity come from less code, not more.

Posted in Agile, Programming, Testing | No Comments »

Tuesday, November 1st, 2011

Every single line of code must be unit tested!

Sound as this advice may seem, it strikes me as quite extreme. IMHO a skilled programmer pragmatically decides when to invest in unit testing.

After practicing (automated) unit testing for over a decade, I’m a strong believer in, and proponent of, automated unit testing. (See my take on why developers should care about Unit Testing and TDD.)

However, over the years I’ve realized that automated unit tests carry four very important costs:

- Cost of writing the unit tests in the first place

- Cost of running the unit tests regularly to get feedback

- Cost of maintaining and updating the unit tests as and when required

- Cost of understanding others’ unit tests

One also starts to recognize some other subtle costs associated with unit testing:

- Illusion of safety: While unit tests give you a great safety net, at times they can also create an illusion of safety, leading developers to rely too heavily on unit tests alone (possibly doing more harm than good).

- Opportunity cost: If I did not invest in this test, what else could I have done in that time? The flip side of this argument is the opportunity cost of repetitive manual testing or, even worse, not testing at all.

- Getting in the way: While unit tests help you drive your design, at times they do get in the way of refactoring. Many times, I’ve refrained from refactoring code because I was intimidated by the sheer effort of refactoring or rewriting a large number of my tests as well. (I’ve learned many patterns to reduce this pain over the years, but the pain still exists.)

- Obscures a simpler design: Many times, I find myself so engrossed in my tests and the design they lead to that I become blind to a better, simpler design. Also, even if I realize half-way through that there might be an alternative design, I’ve already invested in a solution (plus all its tests), so it’s harder to throw away the code. In retrospect, keeping that code always seems like a bad choice.

If we consider all these factors, would you agree with me that:

Automated unit testing is extremely important, but each developer has to make a conscious, pragmatic decision when to invest in unit testing.

It’s easy to say “always write unit tests”, but it takes years of first-hand experience to judge where to draw the line.

Posted in Agile, Design, Learning, Programming, Testing | 4 Comments »

Tuesday, February 1st, 2011

As more and more companies are moving to the Cloud, they want their latest, greatest software features to be available to their users as quickly as they are built. However there are several issues blocking them from moving ahead.

One key issue is the massive amount of time it takes for someone to certify that the new feature is indeed working as expected, and to assure that the rest of the features will continue to work. In spite of this long waiting cycle, we still cannot guarantee that our software will be free of issues. In fact, many times our assumptions about the user’s needs or behavior might themselves be wrong, yet this long testing cycle only validates that the software works the way we assumed it should.

How can we break out of this rut & get thin slices of our features in front of our users to validate our assumptions early?

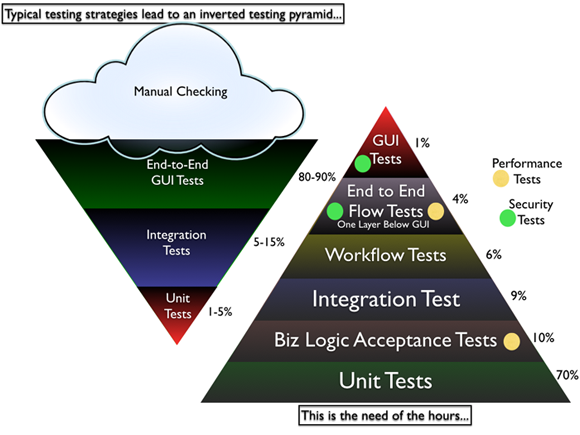

Most software organizations today suffer from what I call the “Inverted Testing Pyramid” problem. They spend most of their time and effort manually checking software. Some invest in automation, but mostly in building slow, complex, fragile end-to-end GUI tests. Very little effort is spent on building a solid foundation of unit & acceptance tests.

This over-investment in end-to-end tests is a slippery slope. Once you start on this path, you end up investing even more time & effort on testing which gives you diminishing returns.

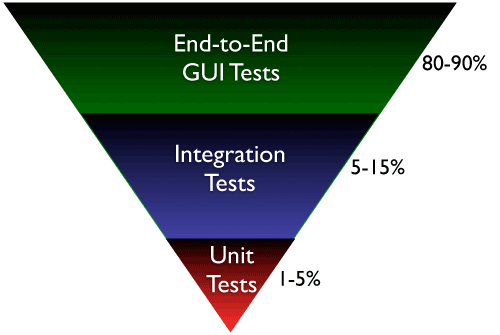

They end up with the majority (80-90%) of their tests being end-to-end GUI tests. Some effort is spent on writing so-called “integration tests” (typically 5-15%), which leaves a shocking 1-5% of their tests being unit/micro tests.

Why is this a problem?

- The base of the pyramid is constructed from end-to-end GUI tests, which are famous for their fragility and complexity. A small pixel change in the location of a UI component can result in test failure. GUI tests are also very time-sensitive, sometimes resulting in random failure (false-negative).

- To make matters worse, most teams struggle to automate their end-to-end tests early on, which results in a huge amount of time spent on manual regression testing. It’s quite common to find test teams struggling to catch up with development. This lag causes many other hard development problems.

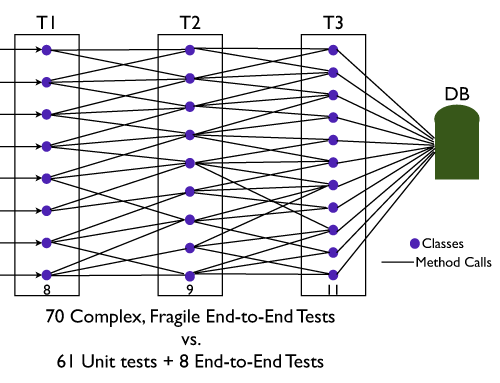

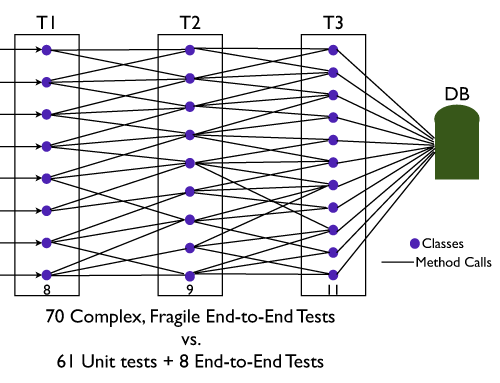

- The number of end-to-end tests required for good coverage is much higher, and the tests themselves more complex, than the number of unit tests plus selected end-to-end tests required. (BEWARE: Don’t be Seduced by Code Coverage Numbers)

- Maintaining a large number of end-to-end tests is quite a nightmare for teams. The following are some core issues with end-to-end tests:

    - It requires deep domain knowledge and high technical skills to write quality end-to-end tests.

    - They take a lot of time to execute.

    - They are relatively resource intensive.

    - Testing negative paths in end-to-end tests is very difficult (or impossible) compared to lower level tests.

    - When an end-to-end test fails, we don’t get pin-pointed feedback about what went wrong.

    - They are more tightly coupled with the environment and have external dependencies, hence fragile. Slight changes to the environment can cause the tests to fail (false-negative).

    - From a refactoring point of view, they don’t give developers the same sense of comfort that unit tests do.
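To make the points about negative paths and pin-pointed feedback concrete, here is a minimal sketch of unit-level checks. `DiscountCode` and its parsing rules are hypothetical, invented purely for illustration, and plain Java checks are used instead of a test framework so the snippet runs on its own.

```java
// Hypothetical domain class, invented for this sketch.
final class DiscountCode {
    private final int percent;

    private DiscountCode(int percent) {
        this.percent = percent;
    }

    // Parses codes of the assumed form "SAVE<percent>", e.g. "SAVE20".
    static DiscountCode parse(String raw) {
        if (raw == null || !raw.startsWith("SAVE"))
            throw new IllegalArgumentException("Unknown discount code: " + raw);
        int percent;
        try {
            percent = Integer.parseInt(raw.substring(4));
        } catch (NumberFormatException e) {
            throw new IllegalArgumentException("Malformed percentage in: " + raw);
        }
        if (percent < 1 || percent > 100)
            throw new IllegalArgumentException("Percentage out of range in: " + raw);
        return new DiscountCode(percent);
    }

    int percent() {
        return percent;
    }
}

class DiscountCodeChecks {
    // Returns true when parsing the given input is rejected.
    static boolean isRejected(String raw) {
        try {
            DiscountCode.parse(raw);
            return false;
        } catch (IllegalArgumentException expected) {
            return true;
        }
    }

    static void check(boolean condition, String message) {
        if (!condition) throw new AssertionError(message);
    }

    public static void main(String[] args) {
        // Happy path.
        check(DiscountCode.parse("SAVE20").percent() == 20, "SAVE20 should give 20%");

        // Negative paths: each check targets one rule, so a failure
        // points straight at the rule that broke.
        check(isRejected(null), "null must be rejected");
        check(isRejected("FREE"), "unknown prefix must be rejected");
        check(isRejected("SAVEabc"), "non-numeric percentage must be rejected");
        check(isRejected("SAVE0"), "0% must be rejected");
        check(isRejected("SAVE101"), "101% must be rejected");

        System.out.println("All checks passed");
    }
}
```

Covering the same five negative paths through a GUI would mean five full trips through the application, and a failure would not say which rule was at fault.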

Again, don’t get me wrong. I’m not suggesting end-to-end integration tests are a scam. I certainly think they have a place and time.

Imagine an automobile company building an automobile without testing or checking everything from the nuts and bolts all the way up to the engine, transmission, brakes, etc., and then just assembling the whole thing somehow and asking you to drive it. Would you test drive that automobile? Yet you will see many software companies using this approach to build software.

What I propose and help many organizations achieve is the right balance of end-to-end tests, acceptance tests and unit tests. I call this “Inverting the Testing Pyramid.” [Inspired by Jonathan Wilson’s book called Inverting The Pyramid: The History Of Football Tactics].

In a later blog post, I’ll highlight various tactics used to invert the pyramid.

Update: I recently came across Alister Scott’s blog post, Introducing the software testing ice-cream cone (anti-pattern). I strongly suggest you read it.

Posted in Agile, Design, Testing | 14 Comments »

Sunday, September 11th, 2005

I usually categorise my tests into these 6 types:

- Unit Tests – Test each unit (class [OO], function [Functional Programming]) in isolation. Written by Devs.

- Domain Logic Acceptance Tests – Tests across the layers, but more focused on validating the core business logic. Written by Customers/Business Analysts and Testers, with the help of Devs. External Dependencies are stubbed out.

- Integration Tests – Validates if our code can correctly talk to external (3rd party) dependencies. Written by Devs.

- Workflow Tests – Validates if the user can successfully complete a workflow at API or Service layer (Ex: New user buys an online ticket for a conference using Credit Card [1. ticket selection, 2. enter discount code, 3. account sign-in, 4. online payment, 5. attendee’s information and 6. final confirmation].) Here we stub out external dependencies like payment gateway, email notification, etc. Written by Domain Experts, Devs and Testers.

- End-to-End Flow Tests – Same as above, with 2 main differences: 1. Flow is written from a UX point of view, not API point of view. 2. Does not stub external dependencies. These tests don’t drive the UI, they operate one layer below the UI. Written by Domain Experts, Devs and Testers.

- UI Tests – Validates the navigation in the UI. Mostly used for sanity testing and important in cases of Multi-lingual support. Written by Testers.
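As a rough illustration of stubbing out external dependencies in a Workflow Test, the sketch below drives a ticket purchase at the service layer against a stubbed payment gateway. `PaymentGateway`, `StubPaymentGateway` and `TicketService` are hypothetical names invented for this example; they are not taken from any real system, and the pricing rule is an assumption.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical port to the external payment provider.
interface PaymentGateway {
    boolean charge(String card, int amountInCents);
}

// Stub: records every charge and returns a canned answer instead of
// calling a real payment provider.
class StubPaymentGateway implements PaymentGateway {
    final List<Integer> charges = new ArrayList<>();
    private final boolean approve;

    StubPaymentGateway(boolean approve) {
        this.approve = approve;
    }

    @Override
    public boolean charge(String card, int amountInCents) {
        charges.add(amountInCents);
        return approve;
    }
}

// Hypothetical service under test.
class TicketService {
    private final PaymentGateway gateway;

    TicketService(PaymentGateway gateway) {
        this.gateway = gateway;
    }

    // Applies the discount, charges the card, and returns a confirmation.
    // Throws IllegalStateException when the payment is declined.
    String buyTicket(String attendee, String card, int priceInCents, int discountPercent) {
        int amount = priceInCents * (100 - discountPercent) / 100;
        if (!gateway.charge(card, amount))
            throw new IllegalStateException("Payment declined for " + attendee);
        return "Confirmed: " + attendee + " paid " + amount + " cents";
    }
}

class TicketWorkflowDemo {
    public static void main(String[] args) {
        StubPaymentGateway approving = new StubPaymentGateway(true);
        TicketService service = new TicketService(approving);

        // Workflow: select ticket, apply 20% discount, pay, get confirmation.
        System.out.println(service.buyTicket("Asha", "4111-XXXX", 10000, 20));
        System.out.println("Charged: " + approving.charges);
    }
}
```

An End-to-End Flow Test would run the same scenario with the real gateway wired in instead of the stub.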

More details: Inverting the Testing Pyramid

Posted in Agile, Testing | No Comments »
